12.29.2010.

Neuroph 2.5 with Neuroph Studio beta Released

Version 2.5b is finally out! This release brings some exciting new features:

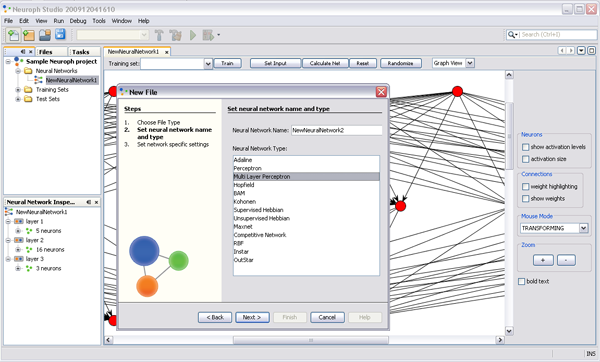

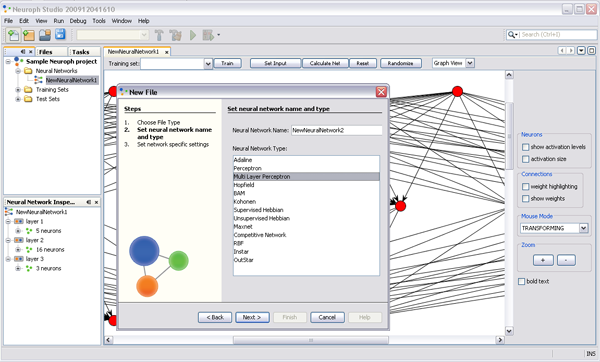

1. Neuroph Studio - new GUI based on NetBeans Platform

This new IDE-like GUI provides a new experience of working with neural networks in Java. The wizard system makes creating neural networks and training sets very intuitive, while the project, properties and navigator views make inspection and manipulation easy. Neuroph Studio also comes with integrated Java development modules from the NetBeans Java IDE. This means that you can develop neural networks, test them and deploy them to your Java apps in the same development environment!

Note that some previously announced features, like the palette and visual editor, are not yet stabilized and are not included in this beta release, but they should be available in the final release.

2. Performance and algorithm optimizations

Performance improvement was one of the main goals for this release. We have improved performance at the Java level by using lighter and faster data structures, and there are also very significant improvements to the learning algorithms.

All this makes Neuroph 2.5 a few times faster than previous releases, and we think it should resolve the performance issues reported for previous versions. We have also provided integration with the high-performance Encog neural network engine.

3. Integration with Encog Engine

Encog Engine is a high-performance neural network library which provides support for advanced technologies like multi-core and GPU (Graphics Processing Unit) processing, and for some advanced learning rules like Resilient Propagation. Neuroph provides easy integration with Encog Engine through a simple switch that turns it on or off. This means that you can use the friendly, easy-to-understand Neuroph API for research and development, and then turn on the high-performance Encog Engine for the production environment. At the moment Encog Engine only supports Multi Layer Perceptrons with Resilient Backpropagation, but in the future it will be extended to other architectures and learning rules.

4. C# Port

Thanks to our friends from the Encog project, Neuroph has been ported to C#. The C# port has not been released yet, but it is available in the SVN repository.

BACKWARD COMPATIBILITY

This release breaks compatibility with previous versions of Neuroph, but that was unavoidable in order to introduce all the improvements. However, the required changes should be easy to apply to existing code; almost all of them involve changing Vectors to double arrays or ArrayLists.
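
As a rough illustration of what such a migration looks like, the sketch below uses plain Java only. The class `VectorMigration` and its methods are hypothetical helpers written for this example and are not part of the Neuroph API; they just contrast a boxed `Vector<Double>` with a primitive `double[]`.

```java
import java.util.Arrays;
import java.util.Vector;

// Illustrative only: VectorMigration and its methods are hypothetical
// helpers, not part of the Neuroph API.
class VectorMigration {

    // Older style: boxed values held in a synchronized Vector.
    static double sumOld(Vector<Double> values) {
        double sum = 0.0;
        for (Double v : values) {
            sum += v; // unboxes a Double on every iteration
        }
        return sum;
    }

    // Newer style: primitive array, no boxing and no synchronization.
    static double sumNew(double[] values) {
        double sum = 0.0;
        for (double v : values) {
            sum += v;
        }
        return sum;
    }

    // One-off conversion for data that is still held in a Vector.
    static double[] toArray(Vector<Double> values) {
        double[] result = new double[values.size()];
        for (int i = 0; i < result.length; i++) {
            result[i] = values.get(i);
        }
        return result;
    }

    public static void main(String[] args) {
        Vector<Double> legacy = new Vector<>(Arrays.asList(0.5, 1.5, 2.0));
        double[] migrated = toArray(legacy);
        System.out.println(sumOld(legacy) + " == " + sumNew(migrated));
    }
}
```

In existing code the conversion helper is usually only needed at the boundaries, where old data structures are handed over to the new API.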

CHANGELOG

1. Using ArrayLists and plain double arrays instead of Vectors everywhere (much faster)

2. LMS - fixed the total network error formula - now using real MSE, so the number of iterations is reduced for all LMS based algorithms

3. Added batch mode support for backpropagation (in fact all LMS based algorithms)

4. Added training data buffer to weights class

5. Changed the Connection class so that it now contains references to the 'from' and 'to' neurons. This halves the number of connection instances needed before.

6. Fixes for Sigmoid and Tanh transfer functions to avoid NaN values

7. Removed learning rule constructors with an NN parameter, since they were causing confusion. A learning rule should be set on a neural network like this:

nnet.setLearningRule(new Backpropagation());

8. Adaline network modified to match the original theoretical model: bipolar inputs, bias, ramp transfer function.

These modifications provide more stable learning.

9. Integration with Encog engine and support for flattened networks and Resilient Backpropagation from Encog.

It is turned off by default but it can be easily turned on with Neuroph.getInstance().flattenNetwork(true);

10. Various other bugfixes reported on the forum and bug trackers
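
To make items 2 and 3 more concrete, here is a minimal, self-contained sketch of LMS training for a single linear neuron in both online and batch mode. It is not Neuroph's implementation: the class `LmsModes`, its `train` method and the sample data are hypothetical, and the error is assumed to be the plain mean of squared errors over one epoch.

```java
// Illustrative only: LmsModes is a hypothetical single linear neuron,
// not Neuroph's implementation.
class LmsModes {

    // Trains weights w in place and returns the MSE measured in the last epoch.
    static double train(double[][] x, double[] d, double[] w,
                        double rate, int epochs, boolean batch) {
        double mse = 0.0;
        for (int e = 0; e < epochs; e++) {
            double[] delta = new double[w.length]; // accumulated changes (batch mode)
            double sqrSum = 0.0;
            for (int p = 0; p < x.length; p++) {
                double out = 0.0;
                for (int i = 0; i < w.length; i++) {
                    out += w[i] * x[p][i];
                }
                double err = d[p] - out;
                sqrSum += err * err;
                for (int i = 0; i < w.length; i++) {
                    double change = rate * err * x[p][i];
                    if (batch) {
                        delta[i] += change; // accumulate, apply after the epoch
                    } else {
                        w[i] += change;     // online: apply immediately
                    }
                }
            }
            if (batch) {
                for (int i = 0; i < w.length; i++) {
                    w[i] += delta[i];
                }
            }
            mse = sqrSum / x.length; // mean squared error over this epoch
        }
        return mse;
    }

    public static void main(String[] args) {
        // Target: d = 2 * x0 + 1; the second input is a constant bias of 1.
        double[][] x = {{0, 1}, {1, 1}, {2, 1}, {3, 1}};
        double[] d = {1, 3, 5, 7};
        System.out.println("online MSE: " + train(x, d, new double[2], 0.05, 2000, false));
        System.out.println("batch MSE:  " + train(x, d, new double[2], 0.05, 2000, true));
    }
}
```

The only difference between the two modes is when the weight changes are applied: after every pattern (online) or once per epoch (batch).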

API CHANGES

There are a lot of API changes in Neuroph 2.5b, but all of them are easy to apply to existing code that used previous versions of Neuroph.

1. Using double arrays instead of Vector. To improve performance, all Vectors have been replaced with arrays and ArrayLists.

Boxing has also been removed everywhere, so wherever Double was used, there is now double.

This applies to NeuralNetwork class and also TrainingSet.

2. Removed learning rule constructors with a NeuralNetwork parameter, since they were causing confusion.

A learning rule should be set on a neural network like this:

nnet.setLearningRule(new Backpropagation());

3. TrainingSet class now supports interface used by Encog Engine

4. There is a switch to turn on or off Encog Engine support

org.neuroph.nnet.Neuroph.getInstance().setFlattenNetworks();

5. In SupervisedLearning the method updateTotalNetworkError(double[] patternError) is now deprecated. Instead of that method we now use two methods:

abstract protected void updatePatternError(double[] patternErrorVector);

abstract protected void updateTotalNetworkError();
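
The split into two methods can be pictured with the standalone sketch below. It does not use or reproduce Neuroph's SupervisedLearning class: `ErrorTracker`, `MseTracker` and `errorFor` are hypothetical names, and it is an assumption of this example that the first method is called once per training pattern and the second once per epoch, with the total error computed as a mean of squared errors.

```java
// Illustrative only: ErrorTracker and MseTracker are hypothetical stand-ins,
// not Neuroph's SupervisedLearning class.
abstract class ErrorTracker {
    protected double totalNetworkError;

    // Assumed to be called once for every training pattern.
    abstract protected void updatePatternError(double[] patternErrorVector);

    // Assumed to be called once all patterns of an epoch have been seen.
    abstract protected void updateTotalNetworkError();
}

class MseTracker extends ErrorTracker {
    private double squaredErrorSum;
    private int patternCount;

    @Override
    protected void updatePatternError(double[] patternErrorVector) {
        for (double e : patternErrorVector) {
            squaredErrorSum += e * e;
        }
        patternCount++;
    }

    @Override
    protected void updateTotalNetworkError() {
        // Mean of the per-pattern squared error sums (an assumed formula).
        totalNetworkError = patternCount == 0 ? 0.0 : squaredErrorSum / patternCount;
    }

    // Convenience helper: runs one "epoch" over the given pattern errors.
    static double errorFor(double[][] patternErrors) {
        MseTracker tracker = new MseTracker();
        for (double[] patternError : patternErrors) {
            tracker.updatePatternError(patternError);
        }
        tracker.updateTotalNetworkError();
        return tracker.totalNetworkError;
    }

    public static void main(String[] args) {
        double[][] errors = {{1.0, 0.0}, {0.0, 2.0}};
        System.out.println("total network error: " + errorFor(errors));
    }
}
```

Custom learning rules that previously overrode the deprecated method would move their per-pattern bookkeeping into the first method and the final calculation into the second.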

DOWNLOADS

Downloads for this release are available at our downloads page
