01.11.2011.

Neuroph 2.6 Released

Neuroph 2.6 has been released!

This release brings great new features:

1. Android support for the image recognition plugin (finally fixed: we now provide an Android compatibility layer)

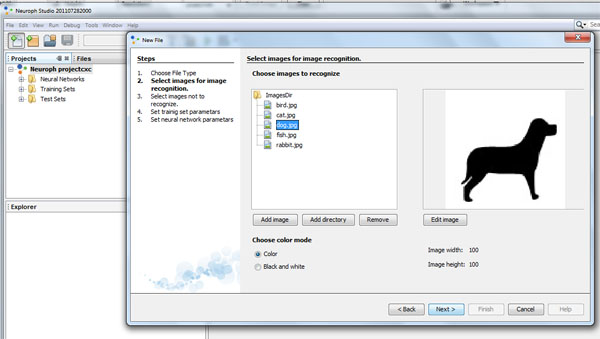

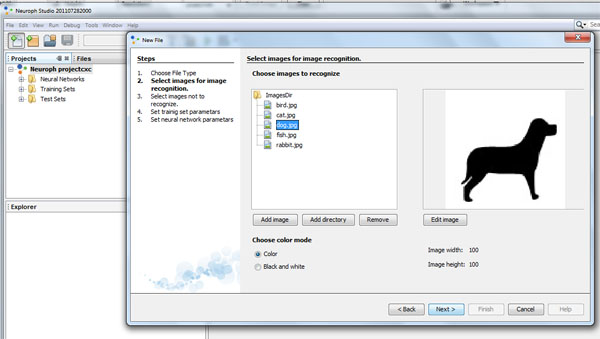

2. Improved image recognition wizard in Neuroph Studio (much easier to use, more comfortable to work with)

3. Data normalization support (Max, MaxMin, DecimalScale): normalization with just one method call, as you're used to in Neuroph

4. Weight randomization techniques (Range, Distort, Gaussian, NguyenWidrow)

5. Input and output adapters for reading and writing data to files, URLs, databases, or streams

6. Simple microbenchmarking framework - to measure, compare and improve implementation performance

7. JUnit tests for the core package (thanks to Shivanth)!

8. New learning rules: Resilient Propagation, Anti Hebbian Learning, Generalized Hebbian Learning
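To make the three normalization schemes from item 3 concrete, here is a minimal standalone sketch using their usual textbook definitions. It does not use Neuroph's own normalizer classes; the class name `NormalizationSketch` and its method names are hypothetical, and the library's implementation may differ in details such as how it handles constant columns.

```java
import java.util.Arrays;

// Hypothetical illustration class, not part of Neuroph.
final class NormalizationSketch {

    // Max: divide every value by the largest absolute value in the column.
    static double[] maxNormalize(double[] values) {
        double maxAbs = 0.0;
        for (double v : values) {
            maxAbs = Math.max(maxAbs, Math.abs(v));
        }
        double[] out = new double[values.length];
        if (maxAbs == 0.0) {
            return out; // all-zero column stays all zeros
        }
        for (int i = 0; i < values.length; i++) {
            out[i] = values[i] / maxAbs;
        }
        return out;
    }

    // MaxMin: map the column linearly onto [0, 1] via (x - min) / (max - min).
    static double[] maxMinNormalize(double[] values) {
        double min = Double.POSITIVE_INFINITY;
        double max = Double.NEGATIVE_INFINITY;
        for (double v : values) {
            min = Math.min(min, v);
            max = Math.max(max, v);
        }
        double[] out = new double[values.length];
        double range = max - min;
        if (range == 0.0) {
            return out; // constant column: assumed here to map to zeros
        }
        for (int i = 0; i < values.length; i++) {
            out[i] = (values[i] - min) / range;
        }
        return out;
    }

    // DecimalScale: divide by the smallest power of ten that brings
    // every absolute value below 1.
    static double[] decimalScaleNormalize(double[] values) {
        double maxAbs = 0.0;
        for (double v : values) {
            maxAbs = Math.max(maxAbs, Math.abs(v));
        }
        double scale = 1.0;
        while (maxAbs / scale >= 1.0) {
            scale *= 10.0;
        }
        double[] out = new double[values.length];
        for (int i = 0; i < values.length; i++) {
            out[i] = values[i] / scale;
        }
        return out;
    }

    public static void main(String[] args) {
        double[] column = {2.0, 50.0, 400.0};
        System.out.println(Arrays.toString(maxNormalize(column)));
        System.out.println(Arrays.toString(maxMinNormalize(column)));
        System.out.println(Arrays.toString(decimalScaleNormalize(column)));
    }
}
```

Each method works on a single column of values; a data set would normally be normalized column by column so that every input ends up on a comparable scale.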

Resilient propagation is one of the most advanced and efficient learning rules in the backpropagation family. Thanks to Borislav Markov, and to Jeff Heaton from Encog for the flat spot fix!
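For readers unfamiliar with resilient propagation, the core idea is that each weight keeps its own step size, which adapts based only on the sign of the gradient. Below is a minimal single-weight sketch of the iRprop- variant. It is not Neuroph's implementation; the class `RpropSketch`, its constants, and the toy quadratic error function are all assumptions chosen for illustration.

```java
// Hypothetical illustration class, not part of Neuroph.
final class RpropSketch {
    // Commonly used Rprop constants (assumed here, not taken from Neuroph).
    static final double ETA_PLUS = 1.2;
    static final double ETA_MINUS = 0.5;
    static final double STEP_MAX = 50.0;
    static final double STEP_MIN = 1e-6;

    double weight;
    double step;
    double previousGradient = 0.0;

    RpropSketch(double initialWeight, double initialStep) {
        this.weight = initialWeight;
        this.step = initialStep;
    }

    // One update for a single weight, given the current error gradient.
    void update(double gradient) {
        double product = previousGradient * gradient;
        if (product > 0) {
            // Same sign as last time: grow the step and keep going.
            step = Math.min(step * ETA_PLUS, STEP_MAX);
            weight -= Math.signum(gradient) * step;
            previousGradient = gradient;
        } else if (product < 0) {
            // Sign flipped: we overshot a minimum, so shrink the step
            // and skip the weight change for this iteration.
            step = Math.max(step * ETA_MINUS, STEP_MIN);
            previousGradient = 0.0;
        } else {
            // First iteration, or the iteration right after a sign flip.
            weight -= Math.signum(gradient) * step;
            previousGradient = gradient;
        }
    }

    // Toy usage: minimize (w - target)^2, whose gradient is 2 * (w - target).
    static double minimize(double target, double start, int iterations) {
        RpropSketch r = new RpropSketch(start, 0.1);
        for (int i = 0; i < iterations; i++) {
            r.update(2.0 * (r.weight - target));
        }
        return r.weight;
    }

    public static void main(String[] args) {
        System.out.println(minimize(3.0, 0.0, 200));
    }
}
```

Because only the sign of the gradient is used, the size of each weight change does not depend on the gradient's magnitude, which is what makes this family of rules robust on flat or steep error surfaces.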

There are also some changes in the framework structure:

1. The TrainingSet class now uses generics to specify the type of training elements (unsupervised or supervised)

2. The value attribute in the Weight class is now public, in order to avoid too many getters and setters, make code more readable, and improve performance

3. To avoid confusion, the method learnInSameThread is deprecated and should not be used. Use the method learn instead.

As of this version, the easyNeurons GUI is no longer supported, and Neuroph Studio should be used instead.

DOWNLOADS

Downloads for this release are available on our downloads page
